ACCV 2007 – Exploiting Inter-Frame Correlation for Fast Video to Reference Image Alignment

Arif Mahmood, Sohaib Khan

8th Asian Conference on Computer Vision, ACCV 2007, Tokyo, Japan

Abstract

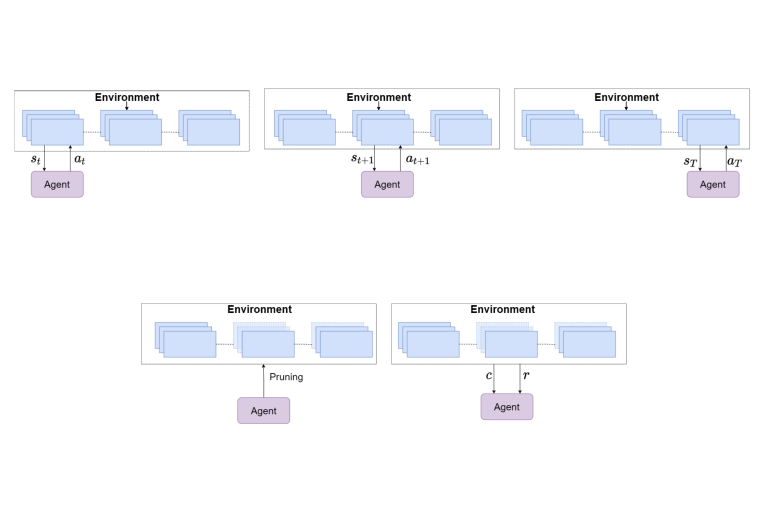

Strong temporal correlation between adjacent frames of a video signal has been successfully exploited in standard video compression algorithms. In this work, we show that the temporal correlation in a video signal can also be used for fast video to reference image alignment. To this end, we first divide the input video sequence into groups of pictures (GOPs). Then, for each GOP, only one frame is fully correlated with the reference image, while for the remaining frames, upper and lower bounds on the correlation coefficient (ρ) are calculated. These newly proposed bounds are significantly tighter than the existing bounds on ρ based on the Cauchy-Schwarz inequality. The bounds are used to eliminate the majority of the search locations, resulting in a significant speedup without affecting the value or location of the global maximum. In our experiments, up to 80% of the search locations were eliminated, and the speedup was up to five times over the FFT-based implementation and up to seven times over spatial domain techniques.
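The abstract does not spell out the new bounds, so the following is only a minimal Python sketch of the GOP-based elimination idea under a stated assumption. It uses angle-based (triangle-inequality) bounds, for example ρ(t, r) ≤ ρ(k, r)·ρ(k, t) + sqrt((1 - ρ(k, r)²)(1 - ρ(k, t)²)) together with the matching lower bound, where k is the fully correlated key frame, t another frame of the GOP and r a reference block. These are not necessarily the bounds derived in the paper. For brevity the sketch works on 1-D signals instead of images, computes ρ directly in the spatial domain (no FFT), and uses the largest lower bound as the elimination threshold. The function names (`ncc`, `rho_bounds`, `align_gop`) and the synthetic data are hypothetical.

```python
import math
import random


def ncc(a, b):
    """Correlation coefficient (rho) of two equal-length sequences.

    Constant inputs get rho = 0 by convention; the bounds below still hold then.
    """
    n = len(a)
    ma, mb = sum(a) / n, sum(b) / n
    da = [x - ma for x in a]
    db = [y - mb for y in b]
    num = sum(x * y for x, y in zip(da, db))
    den = math.sqrt(sum(x * x for x in da) * sum(y * y for y in db))
    return num / den if den > 0 else 0.0


def rho_bounds(rho_kr, rho_kt):
    """(lower, upper) bounds on rho(t, r) from rho(k, r) and rho(k, t).

    Assumed angle-based bounds: rho = cos(angle) between centred, normalised
    vectors, and these angles obey the triangle inequality.
    """
    a = math.acos(max(-1.0, min(1.0, rho_kr)))
    b = math.acos(max(-1.0, min(1.0, rho_kt)))
    return math.cos(min(math.pi, a + b)), math.cos(abs(a - b))


def align_gop(frames, reference, eps=1e-12):
    """Align every frame of one GOP to a 1-D reference signal.

    Returns (best location per frame, number of locations skipped per frame).
    """
    m = len(frames[0])
    blocks = [reference[p:p + m] for p in range(len(reference) - m + 1)]
    key = frames[0]
    # Key frame: exact rho at every search location.
    rho_key = [ncc(key, blk) for blk in blocks]
    best = [max(range(len(blocks)), key=rho_key.__getitem__)]
    skipped = [0]
    for frame in frames[1:]:
        rho_kt = ncc(key, frame)  # a single inter-frame correlation per frame
        bounds = [rho_bounds(r, rho_kt) for r in rho_key]
        # The global maximum of rho is at least the largest lower bound.
        threshold = max(lo for lo, _ in bounds)
        best_p, best_rho, n_skip = None, -2.0, 0
        for p, (_, hi) in enumerate(bounds):
            if hi < threshold - eps:  # cannot hold the global maximum
                n_skip += 1
                continue
            r = ncc(frame, blocks[p])
            if r > best_rho:
                best_p, best_rho = p, r
        best.append(best_p)
        skipped.append(n_skip)
    return best, skipped


if __name__ == "__main__":
    random.seed(0)
    # Smooth reference (random walk) and a GOP of 4 noisy, slowly drifting frames.
    reference = [0.0]
    for _ in range(299):
        reference.append(reference[-1] + random.gauss(0, 1))
    frames = [[x + random.gauss(0, 0.1) for x in reference[100 + k:132 + k]]
              for k in range(4)]
    locations, skipped = align_gop(frames, reference)
    print("best locations:", locations)
    print("locations skipped:", skipped)
```

Every skipped location has an upper bound below a value that the true maximum is known to reach, so the returned locations match an exhaustive search; how many locations are skipped depends on how strongly the key frame correlates with the other frames of the GOP.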

Resources

Download PDF

(Distributed here for timely dissemination of scholarly work. Copyright retained by copyright holders. May not be posted without permission from copyright holders.)

Text Reference:

Arif Mahmood, Sohaib Khan, "Exploiting inter-frame correlation for fast video to reference image alignment" in Proceedings of the 8th Asian Conference on Computer Vision, ACCV 2007, Tokyo, Japan, pp. 647-656, November 2007

Bibtex Reference:

@inproceedings{mahmood2007exploiting,
  title={Exploiting inter-frame correlation for fast video to reference image alignment},
  author={Mahmood, A. and Khan, S.},
  booktitle={Computer Vision--ACCV 2007},
  pages={647--656},
  year={2007},
  publisher={Springer}
}