ICPR2022 – Neural Network Pruning Through Constrained Reinforcement Learning

Shehryar Malik, Muhammad Umair Haider*, Omer Iqbal, Murtaza Taj

Abstract:

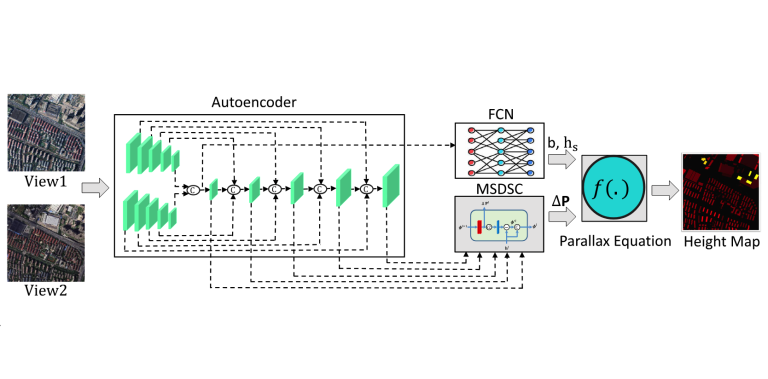

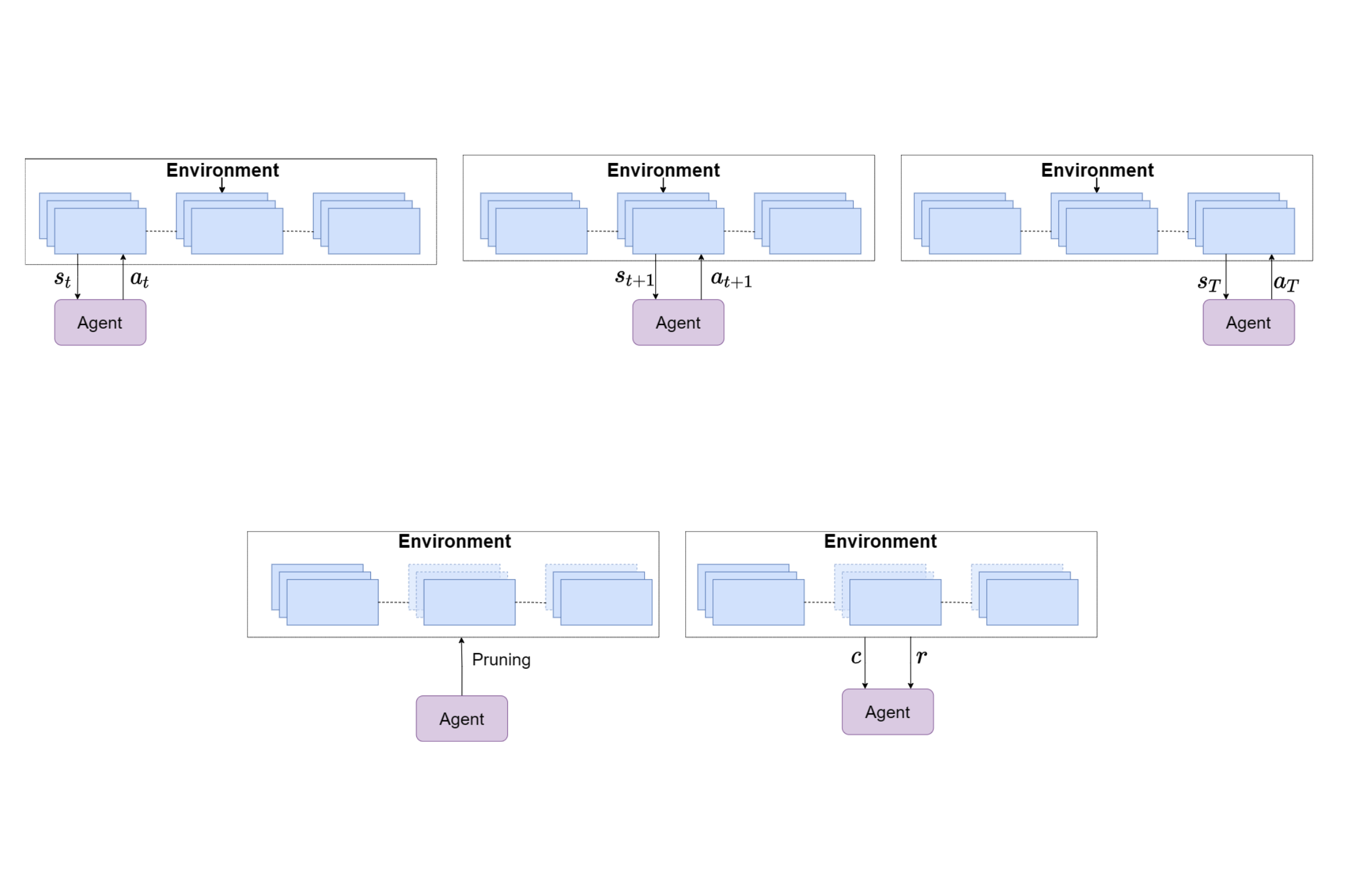

Network pruning reduces the size of neural networks by removing (pruning) neurons such that the performance drop is minimal. Traditional pruning approaches focus on designing metrics to quantify the usefulness of a neuron, which is often tedious and sub-optimal. More recent approaches have instead focused on training auxiliary networks to automatically learn how useful each neuron is; however, they often do not take computational limitations into account. In this work, we propose a general methodology for pruning neural networks. Our proposed methodology can prune neural networks to respect pre-defined computational budgets on arbitrary, possibly non-differentiable, functions. Furthermore, we only assume the ability to evaluate these functions for different inputs, and hence they do not need to be fully specified beforehand. We achieve this by proposing a novel pruning strategy via constrained reinforcement learning algorithms. We demonstrate the effectiveness of our approach via comparison with state-of-the-art methods on standard image classification datasets. Specifically, we remove between $83\%$ and $92.90\%$ of the total parameters on various variants of VGG while achieving performance comparable to or better than that of the original networks. We also achieve a $75.09\%$ reduction in parameters on ResNet18 without incurring any loss in accuracy. The code is also available at our GitHub repository.
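To make the idea of a budget on a black-box function concrete, here is a minimal sketch, not the implementation from the paper. It assumes a small fully connected network, uses the parameter count as the budgeted function, and folds a budget violation into the reward with a fixed penalty weight. The names `param_count`, `penalized_reward`, and `lam` are hypothetical, and the penalty form is an assumption chosen for illustration; the paper's constrained reinforcement learning algorithm may handle the constraint differently.

```python
from typing import List, Sequence


def param_count(layer_sizes: Sequence[int], masks: List[List[int]]) -> int:
    """Count weights and biases left in a fully connected net after pruning.

    layer_sizes: [input_dim, hidden_1, ..., output_dim]
    masks: one 0/1 list per hidden layer; 1 keeps the neuron, 0 prunes it.
    The function is only ever evaluated, never differentiated.
    """
    kept = [layer_sizes[0]] + [sum(m) for m in masks] + [layer_sizes[-1]]
    # Each layer contributes (inputs * outputs) weights plus one bias per output.
    return sum(kept[i] * kept[i + 1] + kept[i + 1] for i in range(len(kept) - 1))


def penalized_reward(accuracy: float, cost: float, budget: float, lam: float) -> float:
    """Illustrative reward: accuracy minus a penalty for exceeding the budget.

    lam is a hypothetical fixed penalty weight (an assumption of this sketch).
    """
    return accuracy - lam * max(0.0, cost - budget)


sizes = [4, 3, 2]                         # toy network: 4 inputs, 3 hidden, 2 outputs
full = param_count(sizes, [[1, 1, 1]])    # 23 parameters, nothing pruned
pruned = param_count(sizes, [[1, 0, 1]])  # 16 parameters, one hidden neuron pruned
```

With a budget of 20 parameters, the unpruned toy network exceeds the budget and is penalized, while the pruned one keeps its accuracy as its reward.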

Resources

PDF: Paper

Text Reference:

Shehryar Malik, Muhammad Umair Haider, Omer Iqbal, Murtaza Taj, "Neural Network Pruning Through Constrained Reinforcement Learning," 26th International Conference on Pattern Recognition (ICPR), 2022.

Bibtex Reference:

@INPROCEEDINGS{TajICPR2022,
  author={Shehryar Malik and Muhammad Umair Haider and Omer Iqbal and Murtaza Taj},
  booktitle={26th International Conference on Pattern Recognition (ICPR)},
  title={Neural Network Pruning Through Constrained Reinforcement Learning},
  year={2022}
}